I realized something uncomfortable a few weeks ago:

The primary reader of my code isn’t another developer anymore.

It’s a model.

I use AI tools constantly. They load files, diff changes, regenerate functions, refactor modules, and repeatedly reread the same code. In many cases, the LLM touches my repository more often than any human does.

So I asked myself a simple question:

If machines are the dominant reader, why am I still formatting exclusively for humans?

That question led to a small experiment.

I compared two versions of my code:

- No .prettierrc: Prettier’s default formatting.

- A deliberately compact Prettier configuration.

Same code. Same logic. Different formatting rules.

The compact version used about 3–4% fewer tokens on average.

On its own, that number isn’t dramatic.

But the fact that formatting alone moved the needle is.

When Formatting Becomes Economic

In a traditional workflow, formatting is mostly about consistency and taste.

In an AI-heavy workflow, formatting affects how expensive your code is to read.

Tokens translate directly to:

- Cost

- Latency

- Context window limits

- Available reasoning headroom

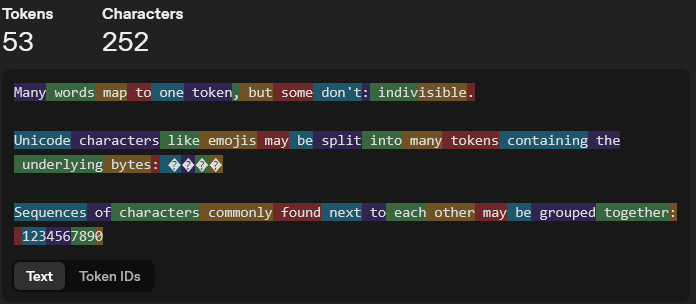

Every newline, trailing comma, unnecessary parenthesis, and wrapped paragraph increases the surface area a model has to ingest.

If the model is constantly reading your repository, that surface area compounds.

So this stopped being a style preference.

It became a resource question.

The Only Thing I Changed

I didn’t refactor logic.

I didn’t simplify functions.

I didn’t rewrite modules.

I only changed formatting rules and measured the difference.

The tighter configuration consistently reduced token usage by roughly 3–4%.

Not earth-shattering.

Not irrelevant.

Especially when that reduction applies every single time the repository is loaded into a model.
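A comparison like this could be run along the following lines. This is a minimal sketch, not the measurement setup used above: `roughTokenCount` is a crude stand-in that treats every whitespace run, word, and punctuation character as one token, and both the helper names and the sample snippet are made up for illustration. For real numbers, pass a tokenizer that matches your model as `countTokens`.

```javascript
// Crude stand-in for a real tokenizer (an assumption for illustration only):
// every whitespace run, word, and punctuation character counts as one token.
const roughTokenCount = text => (text.match(/\s+|\w+|[^\s\w]/g) ?? []).length

// Percentage of tokens saved by `compact` relative to `baseline`.
// `countTokens` is pluggable so a model-specific tokenizer can be swapped in.
const percentSaved = (baseline, compact, countTokens = roughTokenCount) => {
  const before = countTokens(baseline)
  if (before === 0) return 0
  return ((before - countTokens(compact)) / before) * 100
}

// The same hypothetical snippet, hand-formatted in two styles.
const defaultStyle = 'const f = (x) => {\n  return { a: x };\n};\n'
const compactStyle = 'const f = x => {\n  return {a: x}\n}\n'

console.log(percentSaved(defaultStyle, compactStyle).toFixed(1) + '% fewer tokens (rough estimate)')
```

A toy snippet like this is mostly punctuation, so it overstates the effect. Whole files dilute the savings with identifiers, strings, and comments that formatting does not touch.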

The Configuration

Here’s what produced the reduction:

```json
{
  "printWidth": 200,
  "tabWidth": 2,
  "useTabs": false,
  "semi": false,
  "singleQuote": true,
  "quoteProps": "as-needed",
  "trailingComma": "none",
  "bracketSpacing": false,
  "bracketSameLine": true,
  "arrowParens": "avoid",
  "proseWrap": "never",
  "htmlWhitespaceSensitivity": "ignore"
}
```

In practice this means:

- Longer lines → fewer newline characters and less indentation

- No semicolons where they aren’t required

- No trailing commas

- Tighter object spacing

- No parentheses for single-argument arrow functions

- No hard-wrapped markdown

Nothing exotic.

Just less ceremony.
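To make those rules concrete, here is the same logic written in both styles. The code is hand-formatted to approximate what each configuration would produce, not actual Prettier output, and `formatSummary`, `describeOrderDefault`, and `describeOrderCompact` are hypothetical names invented for this example.

```javascript
// Hypothetical helper shared by both versions.
function formatSummary(name, count, totalCents, currency) {
  return `${name}: ${count} items, ${totalCents / 100} ${currency}`
}

// Approximately Prettier's defaults: 80-column wrapping, semicolons,
// double quotes, trailing commas, spaced braces, parenthesized arrow params.
const describeOrderDefault = (order) => {
  const summary = formatSummary(
    order.customerName,
    order.items.length,
    order.totalCents,
    order.currency,
  );
  return { id: order.id, summary: "Order: " + summary };
};

// Approximately the compact configuration: identical logic, fewer characters.
const describeOrderCompact = order => {
  const summary = formatSummary(order.customerName, order.items.length, order.totalCents, order.currency)
  return {id: order.id, summary: 'Order: ' + summary}
}
```

Both functions return the same result for the same input. Only the surface differs: ten lines versus four, with no semicolons, trailing commas, or padding inside braces.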

Dense, Not Hostile

Could I reduce tokens further?

Yes.

I could aggressively compress whitespace. Collapse more expressions. Even minify the repository before AI ingestion.

That would save additional tokens.

It would also make the code painful for me to read and maintain.

There’s a clear boundary between efficiency and sabotage.

The goal isn’t maximum compression.

The goal is higher signal per token while remaining human-readable.

This configuration sits in that middle ground.

Machines Mirror Their Input

There’s another effect I didn’t expect at first.

AI tools tend to mirror the structure they observe.

If your codebase is vertically bloated, they will generate vertically bloated code.

They’ll happily write:

```javascript
if (condition) {
  return true
} else {
  return false
}
```

Instead of the following, which is equivalent whenever condition is already a boolean:

```javascript
return condition
```

When compact structure is normal, verbose expansions look out of place.

Formatting becomes subtle behavioral guidance for the model.

Why This Matters

This isn’t about repository size.

It’s about how Codex actually works.

In a Codex session, the model repeatedly rereads the same files. It pulls them into context. It refreshes them after edits. It regenerates against them. The same code gets ingested again and again.

That means token usage isn’t a one-time cost. It’s a recurring cost.

And right now, usage isn’t unlimited. There are five-hour caps. Weekly caps. If you push too hard in a short window, you get blocked.

So efficiency isn’t theoretical — it directly affects throughput.

If I reduce token load by 3–4%, that doesn’t just shrink a single request. It stretches my usage window. It increases how much iteration I can get done before hitting limits.

In practical terms, that means potentially more work inside the same cap.
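A back-of-the-envelope sketch of that arithmetic follows. Every number in it is hypothetical, and it assumes that all tokens in an iteration are reformatted source code. Prompts, tool output, and model responses are not affected by formatting, so the real gain would be smaller than this upper bound.

```javascript
// Iterations that fit in a cap when each iteration rereads `tokensPerIteration`
// tokens and formatting trims that by `reduction` (0.04 means 4%).
const iterationsWithinCap = (capTokens, tokensPerIteration, reduction = 0) => Math.floor(capTokens / (tokensPerIteration * (1 - reduction)))

// Relative headroom gained: 1 / (1 - r) - 1, slightly more than r itself.
const relativeGain = reduction => 1 / (1 - reduction) - 1

const cap = 1000000 // hypothetical token budget per usage window
const perIteration = 20000 // hypothetical tokens reread per iteration

console.log(iterationsWithinCap(cap, perIteration)) // baseline
console.log(iterationsWithinCap(cap, perIteration, 0.04)) // with a 4% reduction
console.log((relativeGain(0.04) * 100).toFixed(2) + '% more iterations at best')
```

Under these assumptions a 4% reduction buys a little over 4% more iterations, because the saving applies to every reread rather than to a single request.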

When you use these tools heavily and keep getting blocked, you come up with ideas like this.

More broadly, this highlights a shift:

Repositories are no longer written solely for humans. They’re shared reasoning surfaces between humans and machines.

We’ve optimized for CPU cycles. We’ve optimized for bundle size. We’ve optimized for query performance.

Now we’re starting to optimize for token flow.

Restyling my code for machines didn’t rewrite my logic.

It just acknowledged who is becoming the primary reader.
